MediaFire + Duplicity = Backup

Update (2016-02-23): The backend has been merged and is now part of the official duplicity package. See this announcement for how to use it; there are important differences.

Update (2021-09-06): The backend was removed; see my post.

Foreword

Your backups must always be encrypted. Period. Use the longest passphrase you can remember. Always assume the worst and don't trust anybody saying that your online file storage is perfectly secure. Nothing is perfectly secure.

MediaFire is an evolving project, so my statements may no longer apply in, say, three months. However, I suggest you err on the side of caution.

Here are the things you need to be aware of upfront security-wise:

- There is no two-factor authentication for the MediaFire web interface yet.
- The sessions created from your username and password have a very long lifespan. It is not possible to destroy a session unless you change your password.
- The browser side of the web interface can leak your v1 session, since the file viewer (e.g. picture preview or video player) forces the connection over plain HTTP due to mixed-content issues.
- All mediafire-python-open-sdk calls are made over HTTPS, but if you are using your MediaFire account in an untrusted environment such as coffee shop WiFi, you will want to use a VPN.

My primary use case for this service is an encrypted off-site backup of my computers, so I found these risks to be acceptable.

Once upon a time

I was involved in Ubuntu One, and when the file synchronization project closed, I was left without a fallback procedure. I continued with local disk backups, searching for something that had:

- an API that is easy to use,
- a low price for 100GB of data,
- the ability to publish files and folders to the wider internet if needed,
- no requirement for a daemon running on my machine,
- a first-party Android client.

Having considered quite a few possibilities, I ended up intrigued by MediaFire, partially because they had an API and seemingly a Linux client for uploading things (which I was never able to download from their website), but there was not much integration with other software on my favorite platform. They had a first-year promo price of $25/year, so I started playing with their API, and the "Coalmine" project was born, initially for Python 3.

When I got to the point of uploading a file through the API, I decided to upgrade to a paid account that does not expire.

I became a frequent visitor on the MediaFire Development forums, where I reported bugs and asked for documentation updates. I started adding tests to my "Coalmine" project, and at some point I posted a link to my implementation on the developer forum. A MediaFire representative then contacted me, asking whether it would be OK for them to copy the repository into their own space while granting me all the development rights.

That's when "Coalmine" became mediafire-python-open-sdk.

...

Oh, duplicity? Right...

Duplicity

A solid backup strategy was required. I knew about all the hurdles of file synchronization firsthand, so I wanted a dedicated backup solution. Duplicity fits perfectly.

Now, as I said, there were no MediaFire modules for Duplicity, so I ported the code to Python 2 with a couple of lines changed. I looked at the Backend class, put the project aside, and continued to upload GPG-encrypted backups of tar archives.

A few weeks ago I finally felt compelled to do something about Duplicity and found that implementing a backend was way easier than it looked.

And now I have another project, duplicity-mediafire:

It is just a backend that expects the MEDIAFIRE_EMAIL and MEDIAFIRE_PASSWORD environment variables to hold your MediaFire credentials.

It is not part of Duplicity and won't be proposed for inclusion until I am comfortable with the quality of my MediaFire layer.
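Reading those variables on the backend side could look roughly like the sketch below. The helper name read_mediafire_credentials is hypothetical and not taken from duplicity-mediafire; it only illustrates failing early with a clear message when a variable is missing, rather than sending empty credentials to MediaFire.

```python
import os
from typing import Mapping, Tuple

REQUIRED = ("MEDIAFIRE_EMAIL", "MEDIAFIRE_PASSWORD")


def read_mediafire_credentials(environ: Mapping[str, str] = os.environ) -> Tuple[str, str]:
    """Return (email, password) from the environment (hypothetical helper).

    Raises RuntimeError naming every variable that is unset or empty.
    """
    missing = [name for name in REQUIRED if not environ.get(name)]
    if missing:
        raise RuntimeError("missing environment variable(s): " + ", ".join(missing))
    return environ["MEDIAFIRE_EMAIL"], environ["MEDIAFIRE_PASSWORD"]
```

Passing the mapping in as a parameter keeps the helper testable without touching the real process environment.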

Follow the README for installation instructions. I am using the project with duplicity 0.6.25, so it may fail for you if you are using a different version. If it does, please let me know via the project's issues.

For now I put my credentials in a duplicity wrapper script, since dealing with keystores is yet another can of worms.

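```sh
#!/bin/sh
# Export the variables so the exec'd duplicity process inherits them.
export MEDIAFIRE_EMAIL='mediafire@example.com'
export MEDIAFIRE_PASSWORD='this is a secret password'
export PASSPHRASE='much secret, wow'

exec /usr/bin/duplicity "$@"
```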
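The same wrapper can be sketched in Python to make one point explicit: duplicity only sees the credentials because they are part of the environment handed to the process that replaces the wrapper. The function names below are hypothetical, and the values are the placeholders from the shell example.

```python
import os
from typing import Dict, List, Mapping

DUPLICITY = "/usr/bin/duplicity"  # same binary path as the shell wrapper


def duplicity_env(base: Mapping[str, str]) -> Dict[str, str]:
    """Copy `base` and add the variables duplicity and the backend read.

    Hypothetical helper; values are the placeholders from the shell example.
    """
    env = dict(base)  # never mutate the caller's mapping
    env.update({
        "MEDIAFIRE_EMAIL": "mediafire@example.com",
        "MEDIAFIRE_PASSWORD": "this is a secret password",
        "PASSPHRASE": "much secret, wow",
    })
    return env


def main(argv: List[str]) -> None:
    """Replace this process with duplicity, forwarding argv[1:] like "$@"."""
    os.execve(DUPLICITY, [DUPLICITY] + argv[1:], duplicity_env(os.environ))
```

A standalone script would end with a call to main(sys.argv). Like the shell version, it keeps secrets in a plain file, so restrict its permissions accordingly.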

Now, I can run duplicity manually and even put it into cron:

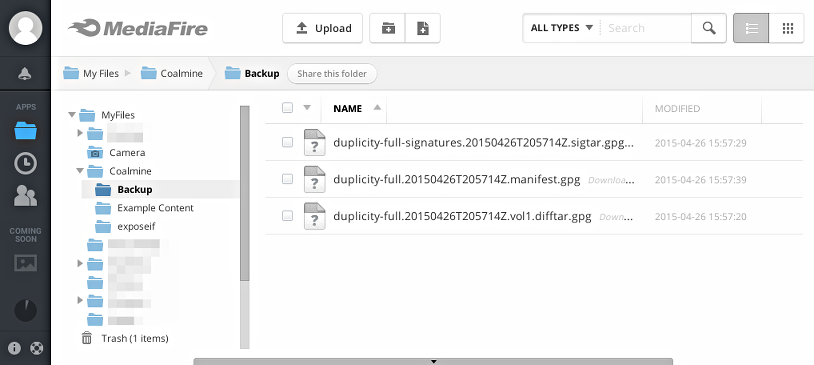

```
$ duplicity full tmp/logs/ mf://Coalmine/Backup
Local and Remote metadata are synchronized, no sync needed.
Last full backup date: none
--------------[ Backup Statistics ]--------------
StartTime 1430081835.40 (Sun Apr 26 16:57:15 2015)
EndTime 1430081835.48 (Sun Apr 26 16:57:15 2015)
ElapsedTime 0.08 (0.08 seconds)
SourceFiles 39
SourceFileSize 584742 (571 KB)
NewFiles 39
NewFileSize 584742 (571 KB)
DeletedFiles 0
ChangedFiles 0
ChangedFileSize 0 (0 bytes)
ChangedDeltaSize 0 (0 bytes)
DeltaEntries 39
RawDeltaSize 580646 (567 KB)
TotalDestinationSizeChange 376914 (368 KB)
Errors 0
-------------------------------------------------

$ duplicity list-current-files mf://Coalmine/Backup
Local and Remote metadata are synchronized, no sync needed.
Last full backup date: Sun Apr 26 16:57:14 2015
Fri Apr 24 21:31:53 2015 .
Fri Apr 24 21:31:52 2015 access_log
Fri Apr 24 21:31:52 2015 access_log.20150407.bz2
...

$ duplicity mf://Coalmine/Backup /tmp/logs
Local and Remote metadata are synchronized, no sync needed.
Last full backup date: Sun Apr 26 16:57:14 2015

$ ls -l /tmp/logs
total 660
-rw-r--r--. 1 user user  5474 Apr 24 21:31 access_log
-rw-r--r--. 1 user user 15906 Apr 24 21:31 access_log.20150407.bz2
-rw-r--r--. 1 user user 26885 Apr 24 21:31 access_log.20150408.bz2
...
```
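Since the run is meant to end up in cron, it can help to check the "Backup Statistics" block programmatically instead of eyeballing it. The sketch below uses hypothetical helpers, not part of duplicity: it collects the "Name value" lines between the two rulers and treats "Errors 0" as success. It relies only on the output format shown above, which may differ between duplicity versions.

```python
import re
from typing import Dict

STAT_LINE = re.compile(r"^(\w+) (\S+)")  # e.g. "SourceFiles 39", "Errors 0"


def parse_backup_statistics(output: str) -> Dict[str, str]:
    """Collect 'Name value' pairs between the Backup Statistics rulers."""
    stats: Dict[str, str] = {}
    inside = False
    for line in output.splitlines():
        if "[ Backup Statistics ]" in line:
            inside = True  # opening ruler
            continue
        if inside and line.startswith("-----"):
            break  # closing ruler
        if inside:
            match = STAT_LINE.match(line.strip())
            if match:
                stats[match.group(1)] = match.group(2)
    return stats


def backup_succeeded(output: str) -> bool:
    """True only if a statistics block was printed and it reports zero errors."""
    return parse_backup_statistics(output).get("Errors") == "0"
```

A cron job could capture duplicity's stdout and send a notification whenever backup_succeeded returns False, as an extra check on top of whatever exit status you already watch.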

If you want to know what's happening behind the scenes during an upload, you can uncomment this block:

```python
# import logging
#
# logging.basicConfig()
# logging.getLogger('mediafire.uploader').setLevel(logging.DEBUG)
```
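Why does uncommenting those lines produce output? A sketch of the standard logging mechanics: logging.basicConfig() attaches a stderr handler to the root logger, and setting the 'mediafire.uploader' logger to DEBUG lets its records through; they then propagate to the root handler even though the root logger itself stays at WARNING. The helper name and the format string below are hypothetical additions.

```python
import logging


def enable_upload_debug_logging(name: str = "mediafire.uploader") -> logging.Logger:
    """Equivalent of the commented block: DEBUG output for the uploader logger."""
    # Adds a stderr handler to the root logger (only if it has none yet).
    logging.basicConfig(format="%(asctime)s %(name)s %(levelname)s: %(message)s")
    logger = logging.getLogger(name)
    # Child loggers such as "mediafire.uploader.x" inherit this level.
    logger.setLevel(logging.DEBUG)
    return logger
```

Only the uploader becomes verbose; other libraries keep the root's default WARNING threshold.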

This is an early version of the backend, so if an upload takes too long and there is no network activity, you may want to terminate the process and rerun the command.
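If you want to automate the "terminate and rerun" advice, a wall-clock timeout is a crude stand-in for "no network activity". The sketch below (hypothetical helper run_with_retries; the timeout and attempt count are up to you) relies on subprocess.run, which kills the child when the timeout expires, and simply starts the command again a limited number of times.

```python
import subprocess
from typing import Sequence


def run_with_retries(cmd: Sequence[str], timeout: float, attempts: int = 3) -> int:
    """Run cmd, killing and restarting it whenever it exceeds `timeout` seconds.

    Returns the exit code of the first attempt that finishes in time and
    re-raises subprocess.TimeoutExpired if every attempt times out.
    """
    for attempt in range(1, attempts + 1):
        try:
            return subprocess.run(list(cmd), timeout=timeout).returncode
        except subprocess.TimeoutExpired:
            if attempt == attempts:
                raise
    raise ValueError("attempts must be >= 1")
```

For example, run_with_retries(["duplicity", "full", "tmp/logs/", "mf://Coalmine/Backup"], timeout=3600). Pick a timeout well above your normal backup duration, since a slow but healthy upload would be killed too.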

FILE SELECTION

This is the section of duplicity(1) to look at if you want to back up multiple directories.

Now, I've spent half an hour trying to find out how to back up only the things I want, so here's how:

Create a duplicity.filelist, e.g. in ~/.config, and put the following in it:

Now run duplicity with --include-globbing-filelist ~/.config/duplicity.filelist in dry-run mode and you'll get something like this (note that the output is fake, so don't try to match the numbers):

```
$ duplicity --dry-run --include-globbing-filelist ~/.config/duplicity.filelist \
    /home/user file://tmp/dry-run --no-encryption -v info
Using archive dir: /home/user/.cache/duplicity/f4f89c3e786d652ca77a73fbec1e2fea
Using backup name: f4f89c3e786d652ca77a73fbec1e2fea
...
Import of duplicity.backends.mediafirebackend Succeeded
...
Reading globbing filelist /home/user/.config/duplicity.filelist
...
A .
A .config
A .config/blah
A bin
A bin/duplicity
A Documents
A Documents/README
--------------[ Backup Statistics ]--------------
StartTime 1430102250.91 (Sun Apr 26 22:37:30 2015)
EndTime 1430102251.46 (Sun Apr 26 22:37:31 2015)
ElapsedTime 0.55 (0.55 seconds)
SourceFiles 6
SourceFileSize 1234 (1 MB)
...
```
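The "A" lines in the -v info output above are the entries duplicity would add, so a quick way to review a selection is to pull out just those lines. This hypothetical helper assumes the output format shown above: one entry per line, prefixed with "A" and a single space.

```python
from typing import List


def selected_paths(dry_run_output: str) -> List[str]:
    """Return the paths listed as 'A <path>' in duplicity's -v info output."""
    return [line[2:] for line in dry_run_output.splitlines() if line.startswith("A ")]
```

Comparing this list against what you expect to be backed up, before the first real run, catches a too-broad or too-narrow filelist cheaply.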

A leading "- " excludes the entry from the file list, while simply listing an item includes it. This makes it possible to whitelist a few nodes instead of blacklisting a ton of unrelated files.
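To make the include/exclude rule concrete, here is a deliberately simplified model of how I read duplicity(1): the first matching condition decides, and anything no condition matches is included, which is why a whitelist needs a catch-all exclude at the end. Assumptions, not duplicity's actual code: entries are absolute paths with no wildcards apart from a bare "**", an included entry also selects its parent directories (as the "." and ".config" lines in the dry run suggest), and the example list with its final "- **" line is hypothetical.

```python
from typing import List, Tuple

Rule = Tuple[bool, str]  # (include?, absolute path or "**")


def parse_filelist(text: str) -> List[Rule]:
    """'- path' excludes; 'path' or '+ path' includes; blank lines are skipped."""
    rules: List[Rule] = []
    for raw in text.splitlines():
        line = raw.strip()
        if not line:
            continue
        if line.startswith("- "):
            rules.append((False, line[2:].strip()))
        elif line.startswith("+ "):
            rules.append((True, line[2:].strip()))
        else:
            rules.append((True, line))
    return rules


def _under(path: str, prefix: str) -> bool:
    """True if `path` is `prefix` itself or lies somewhere below it."""
    return path == prefix or path.startswith(prefix.rstrip("/") + "/")


def is_selected(path: str, rules: List[Rule]) -> bool:
    """First matching rule wins; paths that no rule matches are included."""
    for include, pattern in rules:
        if pattern == "**":
            matched = True
        elif include:
            # An include also matches the directories leading down to it.
            matched = _under(path, pattern) or _under(pattern, path)
        else:
            matched = _under(path, pattern)
        if matched:
            return include
    return True
```

With a list such as "/home/user/bin" followed by "- **", only /home/user, /home/user/bin and its contents survive; drop the last line and everything else is included again.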

In my case I run:

```
$ duplicity --include-globbing-filelist ~/.config/duplicity.filelist /home/user mf://Coalmine/duplicity/
```

And this is how I do off-site backups.

P.S. If you wonder why I decided to use a passphrase instead of a GPG key: I want the key to be memorizable, so that if I lose access to my GPG key, I will most likely still be able to recover the files.