Testing Libvirt Over TLS

libvirt is a technology by Red Hat that implements a single interface for different types of virtualization methods. Personally I am using it for KVM/QEMU and LXC, but the list on their website is much larger and includes UML, Xen, OpenVZ and even VMware ESX and GSX hypervisors.

If you are using KVM directly, you will construct the command line manually and will eventually end up with something like this:

    /usr/bin/kvm -S -M pc-1.0 -cpu qemu32 -enable-kvm -m 1024 \
      -smp 4,sockets=4,cores=1,threads=1 \
      -name plum -uuid 1da8c95b-0586-d41a-8c64-760034aa2453 \
      -nodefconfig -nodefaults \
      -chardev socket,id=charmonitor,path=/var/lib/libvirt/qemu/plum.monitor,server,nowait \
      -mon chardev=charmonitor,id=monitor,mode=control -rtc base=utc -no-shutdown \
      -drive file=/dev/vg0/vm-nfs-test,if=none,id=drive-virtio-disk0,format=raw \
      ...

I've been there, and wrote scripts to help maintain running kvm instances. For a small number of options that's OK, but interacting with the instances through the qemu monitor quickly became very tedious.

When I found out about libvirt I was really excited. Well, I am still excited :)

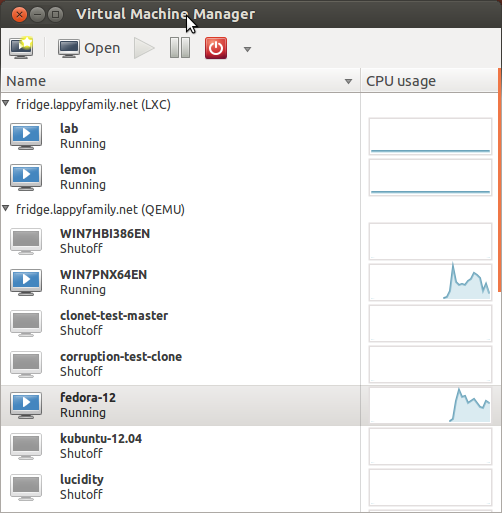

The most user-visible part of libvirt is virt-manager which provides the GUI for libvirt API. It looks like this:

It allows creating, reconfiguring, cloning and deleting virtual machines and storage, configuring virtual networks and controlling instance power states: almost everything you need.

Another client is a CLI application called virsh:

    virsh # list
     Id Name                 State
    ----------------------------------
      4 plum                 running
      5 WIN7PNX64EN          running
      6 fedora-12            running

It allows modifying the VM description directly (that's an XML file), managing the storage pool... Well, pretty much everything that virt-manager does and a little bit more, such as snapshotting the VM (warning: I am currently investigating the corruption happening with qcow2 disks but have not yet obtained any results).

libvirt is a client library. The server side is implemented by libvirtd. Clients can connect to libvirtd through Unix domain sockets or remotely via TCP.

To make contacting virtual hosts easier, clients can connect to a remote server through SSH. Basically, the client creates an SSH session, starts netcat on the remote end and makes it relay the commands from the local end to the Unix domain socket. In this case you will see the following in the process list on the server:

    sh -c if nc -q 2>&1 | grep "requires an argument" >/dev/null 2>&1; then \
        ARG=-q0;else ARG=;fi;nc $ARG -U /var/run/libvirt/libvirt-sock

The grep is needed because there are two versions of netcat in existence, netcat-openbsd and netcat-traditional, each with slightly different options.
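The probe relies on the error text: a netcat that knows `-q` complains that the option "requires an argument" when it is given without a value, and anything else is taken to mean `-q` is unsupported. Below is a minimal sketch of that decision as a standalone function. The name `pick_nc_arg` is hypothetical, and it takes the probe output as an argument so the logic can be tried without netcat installed.

```shell
# Hypothetical helper: decide whether to pass -q0 to nc, given the text
# that `nc -q 2>&1` printed. Mirrors the grep in the command shown above.
pick_nc_arg() {
    case "$1" in
        *"requires an argument"*) printf '%s\n' "-q0" ;;
        *)                        printf '%s\n' "" ;;
    esac
}

# Example use on a real host (assumes nc is installed):
#   ARG=$(pick_nc_arg "$(nc -q 2>&1)")
#   nc $ARG -U /var/run/libvirt/libvirt-sock
```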

The user account on the server should have access to the /var/run/libvirt/libvirt-sock file. The easiest way to arrange this on Ubuntu is to add the user account to the libvirtd group. In Fedora it is customary to use the root account and allow root to log in via SSH (which I will [never](/blog/2009/06/permitrootlogin-yes-is-default-value/) do again).
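On Ubuntu the group change could be checked and applied roughly as follows. This is only a sketch: `in_group_list` is a hypothetical helper, and the user name `rtg` is just an illustration. New group membership normally takes effect at the next login.

```shell
# Hypothetical helper: succeed if GROUP appears in a space-separated list
# of group names, such as the output of `id -nG USER`.
in_group_list() {
    group=$1
    shift
    for g in $*; do
        [ "$g" = "$group" ] && return 0
    done
    return 1
}

# Example use on the server:
#   in_group_list libvirtd "$(id -nG rtg)" || sudo adduser rtg libvirtd
```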

For remote virtualization servers the SSH access has another benefit: you can use the VNC console without exposing its ports to the outside world. In this case, upon starting virt-viewer or using the graphical console from virt-manager, a new SSH connection is created with...

    sh -c nc -q 2>&1 | grep "requires an argument" >/dev/null; \
        if [ $? -eq 0 ] ; then CMD="nc -q 0 127.0.0.1 5906"; \
        else CMD="nc 127.0.0.1 5906";fi;eval "$CMD";

That allows kvm to listen on the loopback interface only, while still being accessible to somebody with a shell account on the VM server.
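The same loopback-only port can also be reached by hand with an ordinary SSH port forward. This is not what virt-manager does internally (it uses the netcat relay shown above); it is only a sketch of an equivalent manual tunnel. The helper names are hypothetical, and the display number 6 is inferred from port 5906 in the command above.

```shell
# VNC display N listens on TCP port 5900 + N, so port 5906 is display :6.
vnc_port() {
    echo $((5900 + $1))
}

# Hypothetical helper: print (not run) an ssh command that forwards a local
# port to the loopback-only VNC port on the server.
vnc_tunnel_cmd() {
    host=$1
    port=$(vnc_port "$2")
    echo "ssh -N -L $port:127.0.0.1:$port $host"
}

vnc_tunnel_cmd fridge.lappyfamily.net 6
# prints: ssh -N -L 5906:127.0.0.1:5906 fridge.lappyfamily.net
```

With the tunnel running, a VNC viewer pointed at 127.0.0.1:5906 on the client would reach the guest console.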

Now this is all nice, but using SSH has one drawback: it requires a shell account on the server, which may not always be desirable. Additionally, every virsh invocation has to set up and tear down an SSH session, which adds to the total running time of the command. And once I had 10 virtual machines, volume listing in virt-manager became really slow, which I attributed entirely to the SSH session. So I decided to set everything up for a TLS connection and compare the speed of qemu+tls vs qemu+ssh.

I am not using SASL authentication and rely on TLS certificates only.

So, is connecting to libvirtd over TLS faster? Yes. Does virt-manager work faster? No.

First of all I followed the detailed instructions on the libvirt wiki, creating all the necessary keys and certificates. Since I wanted only one account to become the administrative one and not the whole host (even though I am the only user on the laptop), I copied clientcert.pem, clientkey.pem and cacert.pem to ~/.pki/libvirt/.
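The copy step can be sketched as a small function. The name `install_client_pki` and the source directory are hypothetical, and tightening the permissions on the private key is my own addition rather than something the steps above mention.

```shell
# Hypothetical helper: copy the client certificate, client key and CA
# certificate from SRC into a per-user libvirt PKI directory
# (default: ~/.pki/libvirt).
install_client_pki() {
    src=$1
    dest=${2:-$HOME/.pki/libvirt}
    mkdir -p "$dest" || return 1
    for f in clientcert.pem clientkey.pem cacert.pem; do
        cp "$src/$f" "$dest/$f" || return 1
    done
    # Assumption: the private key should be readable by its owner only.
    chmod 600 "$dest/clientkey.pem"
}

# Example use:
#   install_client_pki /path/to/generated/files
```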

Then I added -l to the list of libvirtd options on the server in /etc/default/libvirt-bin and restarted the service:

    $ time virsh -c qemu+ssh://fridge.lappyfamily.net/system list
     Id Name                 State
    ----------------------------------
      4 plum                 running
      6 fedora-12            running

    real    0m0.798s
    user    0m0.040s
    sys     0m0.012s

    $ time virsh -c qemu://fridge.lappyfamily.net/system list
     Id Name                 State
    ----------------------------------
      4 plum                 running
      6 fedora-12            running

    real    0m0.134s
    user    0m0.052s
    sys     0m0.008s

Yes! It is indeed faster! (qemu+tls:// is the same as qemu:// alone)
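The URIs used above follow a simple pattern, which a small helper makes explicit. `libvirt_uri` is a hypothetical name, and the sketch only covers the QEMU system instance used in this post.

```shell
# Hypothetical helper: build a libvirt URI for the QEMU system instance.
# An empty transport gives qemu://HOST/system, which, as noted above,
# behaves the same as qemu+tls://.
libvirt_uri() {
    transport=$1
    host=$2
    if [ -n "$transport" ]; then
        echo "qemu+$transport://$host/system"
    else
        echo "qemu://$host/system"
    fi
}

# Example: time the same listing over both transports.
#   for t in ssh tls; do
#       time virsh -c "$(libvirt_uri "$t" fridge.lappyfamily.net)" list
#   done
```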

Checking virt-manager proved that while connecting is indeed faster, all other operations are carried over an existing SSH connection and take exactly the same amount of time. That needs to be investigated in the code.

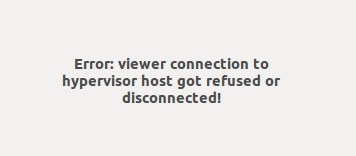

Remember that the VNC connection is also proxied via SSH? VNC is gone for virt-manager, as it relies on that proxying. No SSH, no VNC.

You can get VNC console back if you reconfigure the VNC server to listen on all interfaces. While virt-manager still can't connect to the graphical console, virt-viewer works perfectly.

So, having performed all these steps, I can say it was not really worth it, but I will definitely continue using the TLS connection because of the initial speed-up. You will want to use TLS connections under very particular circumstances: where using SSH may add an additional point of failure (such as automated provisioning of virtual machines), or when you don't want to expose SSH to the outside world but still want to be able to connect to libvirtd (with TLS your traffic is both encrypted and authenticated using TLS certificates). Using SASL allows you to have libvirt-specific accounts separate from your server accounts, and that may be useful sometimes too.

Serial console

Now my remote libvirt server is accessible via SSH and a TLS socket and, since VNC is not available, I started to look into making the Linux virtual machines log to the serial console, as it is accessible over the TLS socket. That's actually quite easy for the kernel and getty on Ubuntu; I haven't found a way to make grub cooperate yet:

Edit /etc/default/grub and alter GRUB_CMDLINE_LINUX_DEFAULT to contain the consoles you want the boot messages to appear on:
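As an illustration only (the exact value used on plum is not shown), a setting that sends boot messages to both the VGA console and the first serial port might look like this. The 38400 speed is an assumption, chosen to match the getty configuration used later in this post.

```shell
# /etc/default/grub (fragment; the values below are an assumption)
# tty0 is the VGA console, ttyS0 is the first serial port.
# The last console= entry is the one the kernel uses for /dev/console.
GRUB_CMDLINE_LINUX_DEFAULT="console=tty0 console=ttyS0,38400n8"
```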

Run update-grub for the changes to take effect. This command will combine all the files needed to bring grub up and write the /boot/grub/grub.cfg file. Never modify that file directly because the changes will be overwritten.

Now we run a login session on ttyS0 with this /etc/init/ttyS0.conf upstart file:

    # ttyS0 - getty
    #
    # This service maintains a getty on ttyS0 from the point the system is
    # started until it is shut down again.

    start on stopped rc RUNLEVEL=[2345] and (
                not-container or
                container CONTAINER=lxc or
                container CONTAINER=lxc-libvirt)

    stop on runlevel [!2345]

    respawn
    exec /sbin/getty -8 38400 ttyS0

Run start ttyS0 to bring the console up, then run virsh console:

    $ virsh console plum
    Connected to domain plum
    Escape character is ^]
    *press <Enter>*

    Ubuntu 12.04 LTS plum ttyS0

    plum login:

Upon reboot the console will have all the info the kernel prints out.

    $ virsh console plum
    Connected to domain plum
    Escape character is ^]

    Ubuntu 12.04 LTS plum ttyS0

    plum login: rtg
    Password:
    [...]
    rtg@plum:~$ sudo reboot
    [...]
     * Will now restart
    [ 507.815197] Restarting system.
    [ 0.000000] Initializing cgroup subsys cpuset
    [ 0.000000] Initializing cgroup subsys cpu
    [ 0.000000] Linux version 3.2.0-24-generic-pae (buildd@vernadsky) (gcc version 4.6.3 (Ubuntu/Linaro 4.6.3-1ubuntu5) ) #37-Ubuntu SMP Wed Apr 25 10:47:59 UTC 2012 (Ubuntu 3.2.0-24.37-generic-pae 3.2.14)
    [...]

Nice, huh?