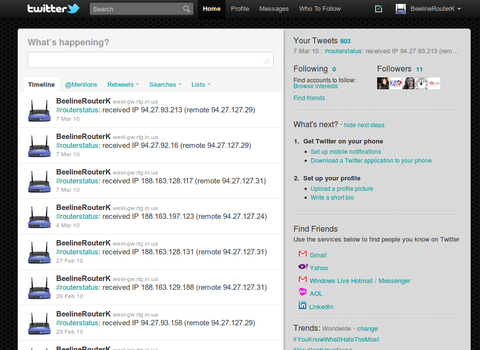

Beeline Home Internet has started providing IPTV through its LAN. The description of the service is given here.

Since this was my first experience with multicasting, I had no clue what to do, where to get the traffic from, or how to forward it to my LAN.

It turned out to be pretty easy, although it unfortunately requires adjusting the kernel .config even in the latest OpenWRT trunk. Please note that I am using the brcm-2.4 kernel.

    Index: trunk/target/linux/brcm-2.4/config-default
    ===================================================================
    --- trunk/target/linux/brcm-2.4/config-default (revision 16833)
    +++ trunk/target/linux/brcm-2.4/config-default (working copy)
    @@ -373,3 +373,24 @@
     # CONFIG_WDTPCI is not set
     # CONFIG_WINBOND_840 is not set
     # CONFIG_YAM is not set
    +CONFIG_IP_MROUTE=y
    +CONFIG_IP_PIMSM_V1=y
    +CONFIG_IP_PIMSM_V2=y

The PIMSM* options are not strictly necessary, but I included them to be on the safe side.

Then we need to either build the firmware with igmpproxy included or install igmpproxy on the already running firmware.

After this, configure igmpproxy in /etc/igmpproxy.conf:

    quickleave
    phyint eth0.1 upstream ratelimit 0 threshold 1
            altnet 192.168.0.1
    phyint br-lan downstream ratelimit 0 threshold 1
    phyint ppp0 disabled
    phyint lo disabled

You can read about my network setup here.

The altnet entry is required so that packets from sources outside the upstream interface's own subnet are allowed to be routed. In my case the LAN is 10.0.0.0/8, while IPTV is multicast from 192.168.0.1, which is why the entry is needed. You can also find out the source address by running tcpdump: after your host joins the group (igmpproxy should already be running), you will see a large number of packets going to some multicast address (say 225.225.225.1).
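The reasoning behind altnet can be illustrated with a few lines of Python. This is only a sketch of the idea as described above, not code taken from igmpproxy: a source is accepted on the upstream interface when it lies inside the interface's own subnet or inside one of the altnet entries. The function name `source_allowed` is made up for this example.

```python
import ipaddress


def source_allowed(source, interface_net, altnets=()):
    """Return True if `source` lies in the interface subnet or in any altnet.

    Illustrative only: it mimics the idea behind the altnet option and is
    not derived from the igmpproxy sources.
    """
    addr = ipaddress.ip_address(source)
    networks = [interface_net, *altnets]
    # strict=False lets a bare host address such as 192.168.0.1 act as a /32.
    return any(addr in ipaddress.ip_network(n, strict=False) for n in networks)


if __name__ == "__main__":
    # The LAN is 10.0.0.0/8, but the stream comes from 192.168.0.1.
    print(source_allowed("192.168.0.1", "10.0.0.0/8"))                   # False
    print(source_allowed("192.168.0.1", "10.0.0.0/8", ["192.168.0.1"]))  # True
```

Without the altnet entry the IPTV source falls outside 10.0.0.0/8 and is rejected; with it, the same source is accepted.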

You can keep igmpproxy in the foreground by starting it with the -d switch.

Once you have found the source IP, you need to allow it in your firewall. For a one-off test, add the rule directly:

    iptables -A forwarding_wan -s $source_ip -d 224.0.0.0/4 -j ACCEPT

For a permanent solution, add this to /etc/config/firewall:

    config rule
            option src wan
            option proto udp
            option src_ip 192.168.0.1
            option dest lan
            option dest_ip 224.0.0.0/4
            option target ACCEPT

You should now be ready to receive multicasts from 192.168.0.1. Start VLC and point it at the multicast group address, say 225.225.225.1, and you should get a picture.
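If VLC shows nothing, it can help to check whether any datagrams reach the client at all. The following is a minimal sketch of a multicast receiver, assuming the example group 225.225.225.1 and a UDP port of 1234; the port is purely a placeholder, since the real one depends on your provider's channel list. It joins the group and prints the sender of the first few datagrams, which is also another way to learn the source address.

```python
import socket

GROUP = "225.225.225.1"  # example group from the text
PORT = 1234              # assumption: replace with your provider's port


def membership_request(group, iface_ip="0.0.0.0"):
    """Pack a struct ip_mreq: group address followed by local interface address."""
    return socket.inet_aton(group) + socket.inet_aton(iface_ip)


def receive(group=GROUP, port=PORT, count=5):
    """Join `group` and print the sender of the first `count` datagrams."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind(("", port))
    # Joining makes the kernel send an IGMP membership report for the group.
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_ADD_MEMBERSHIP,
                    membership_request(group))
    try:
        for _ in range(count):
            data, (src, src_port) = sock.recvfrom(2048)
            print(f"{len(data)} bytes from {src}:{src_port}")
    finally:
        sock.close()


if __name__ == "__main__":
    receive()
```

The script blocks until traffic arrives, so silence means the stream is not reaching the client on that port.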

You will also need an additional firewall rule so that the stream does not stop suddenly. This happens because your ISP gateway (10.22.234.1 in my case) sends IGMP membership queries to your router, and these queries are blocked by default. To prevent this, check your gateway IP and add the following rule to /etc/config/firewall:

    config 'rule'
            option 'src' 'wan'
            option 'proto' 'igmp'
            option 'src_ip' '10.22.234.1'
            option 'target' 'ACCEPT'
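Since both firewall rules differ between setups only in the two addresses, they can be generated from a template. The sketch below is just a convenience for substituting your own values; the helper names `uci_rule` and `iptv_rules` are invented for this example and are not part of OpenWRT.

```python
def uci_rule(**options):
    """Render a firewall 'config rule' stanza from keyword options."""
    lines = ["config rule"]
    for name, value in options.items():
        lines.append(f"\toption {name} '{value}'")
    return "\n".join(lines) + "\n"


def iptv_rules(source_ip, gateway_ip):
    """Return the two stanzas from this post for the given addresses."""
    # Allow the UDP stream from the IPTV source to any multicast group.
    stream = uci_rule(src="wan", proto="udp", src_ip=source_ip,
                      dest="lan", dest_ip="224.0.0.0/4", target="ACCEPT")
    # Allow IGMP queries coming from the ISP gateway.
    igmp = uci_rule(src="wan", proto="igmp", src_ip=gateway_ip,
                    target="ACCEPT")
    return stream + "\n" + igmp


if __name__ == "__main__":
    print(iptv_rules("192.168.0.1", "10.22.234.1"))
```

The output can be reviewed and then appended to /etc/config/firewall by hand.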

My network setup had one major drawback. My WLAN and Ethernet are bridged together, so the two networks are connected. I did this to share the address space without any additional firewall or routing tricks. However, it means that even if I receive the IPTV stream over the wire, the WiFi network is flooded as well, rendering our laptops completely unusable because their WiFi cards are busy receiving the packets. The router itself sends the packets fine, though.

This can be made to work properly by splitting a new VLAN out of the existing configuration, creating the corresponding VLAN interfaces and firewall zones, and adjusting igmpproxy accordingly. If you want to know how to do this, feel free to comment and I will describe my current setup in full.

Finally, if the client runs Linux and tcpdump shows that UDP data is flowing but the client still does not play the stream, check the value of the following sysctl:

sysctl net.ipv4.conf.$interface.rp_filter

If it is set to 1 (true), the kernel drops the packets because the interface they arrive on does not match the one expected from the routing table (see RFC 1812, section 5.3.8). This can be fixed as follows:

sysctl net.ipv4.conf.$interface.rp_filter=0
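To see the setting for every interface at once, the values can be read straight from /proc. The snippet below is a small sketch that assumes the usual Linux layout under /proc/sys/net/ipv4/conf; the function name `read_rp_filter` is made up for this example. On recent kernels the effective value is the maximum of the `all` entry and the per-interface entry, so `all` is worth checking too.

```python
from pathlib import Path


def read_rp_filter(base="/proc/sys/net/ipv4/conf"):
    """Return {interface: rp_filter value} for every interface under `base`."""
    values = {}
    for entry in sorted(Path(base).glob("*/rp_filter")):
        values[entry.parent.name] = int(entry.read_text().strip())
    return values


if __name__ == "__main__":
    for iface, value in read_rp_filter().items():
        note = "" if value == 0 else "  <- reverse-path filtering enabled"
        print(f"{iface}: {value}{note}")
```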

Feel free to comment on the post if you need any additional information.